One of the joys of the tenure track is having to keep a record of everything you do in the areas of teaching, research, and service. Since 2015, I’ve been keeping a spreadsheet of the manuscripts I’ve reviewed: specifically, the title, manuscript number, and the recommendation I provided. Around 2018, I also started keeping track of when I agreed to review each paper, when the deadline was, and when I submitted my review.

So h/t to Manne Gerell for sending me down this rabbit hole. I thought I’d look back on how these “data” describe me as a reviewer.

I’ve now reviewed 125 manuscripts across 41 different journals (I also have 3 outstanding 😬). Recently, it’s worked out to around 20-25 manuscripts per year, or around two per month. I haven’t tracked the invitations I’ve declined, but it feels like I’m doing that more often lately.

| Journal | Manuscripts reviewed (N) |
|---|---|
| Journal of Criminal Justice | 15 |
| Justice Quarterly | 12 |
| Criminal Justice and Behavior | 9 |
| Criminology | 9 |
| Journal of Research in Crime and Delinquency | 9 |
| Policing: An International Journal | 9 |
| Journal of Crime and Justice | 6 |
| Criminal Justice Studies | 4 |
| Criminology & Public Policy | 4 |
| Journal of Experimental Criminology | 4 |
| Criminal Justice Review | 3 |
| Journal of Criminal Justice Education | 3 |
| Journal of Quantitative Criminology | 3 |
| Police Quarterly | 3 |
| American Journal of Criminal Justice | 2 |
| International Criminal Justice Review | 2 |
| International Journal of Comparative and Applied Criminal Justice | 2 |
| Police Practice and Research | 2 |
| Policing & Society | 2 |
| Policing: A Journal of Policy and Practice | 2 |
| Psychology, Crime and Law | 2 |
| The Sociological Quarterly | 2 |
| American Journal of Sociology | 1 |
| Australian and New Zealand Journal of Criminology | 1 |
| Campbell Collaboration | 1 |
| Crime & Delinquency | 1 |
| Criminal Justice Policy Review | 1 |
| Criminology, Criminal Justice, Law & Society | 1 |
| Economics and Politics | 1 |
| Feminist Criminology | 1 |
| International Journal of Law, Crime and Justice | 1 |
| Journal of Empirical Legal Studies | 1 |
| Journal of Trust Research | 1 |
| LEADS Scholar Journal | 1 |
| Law & Society Review | 1 |
| Nature Human Behaviour | 1 |
| PLoS ONE | 1 |
| Race and Justice | 1 |
| Social Problems | 1 |
| Social Science Quarterly | 1 |
| Violence Against Women | 1 |
| Total | 128 |

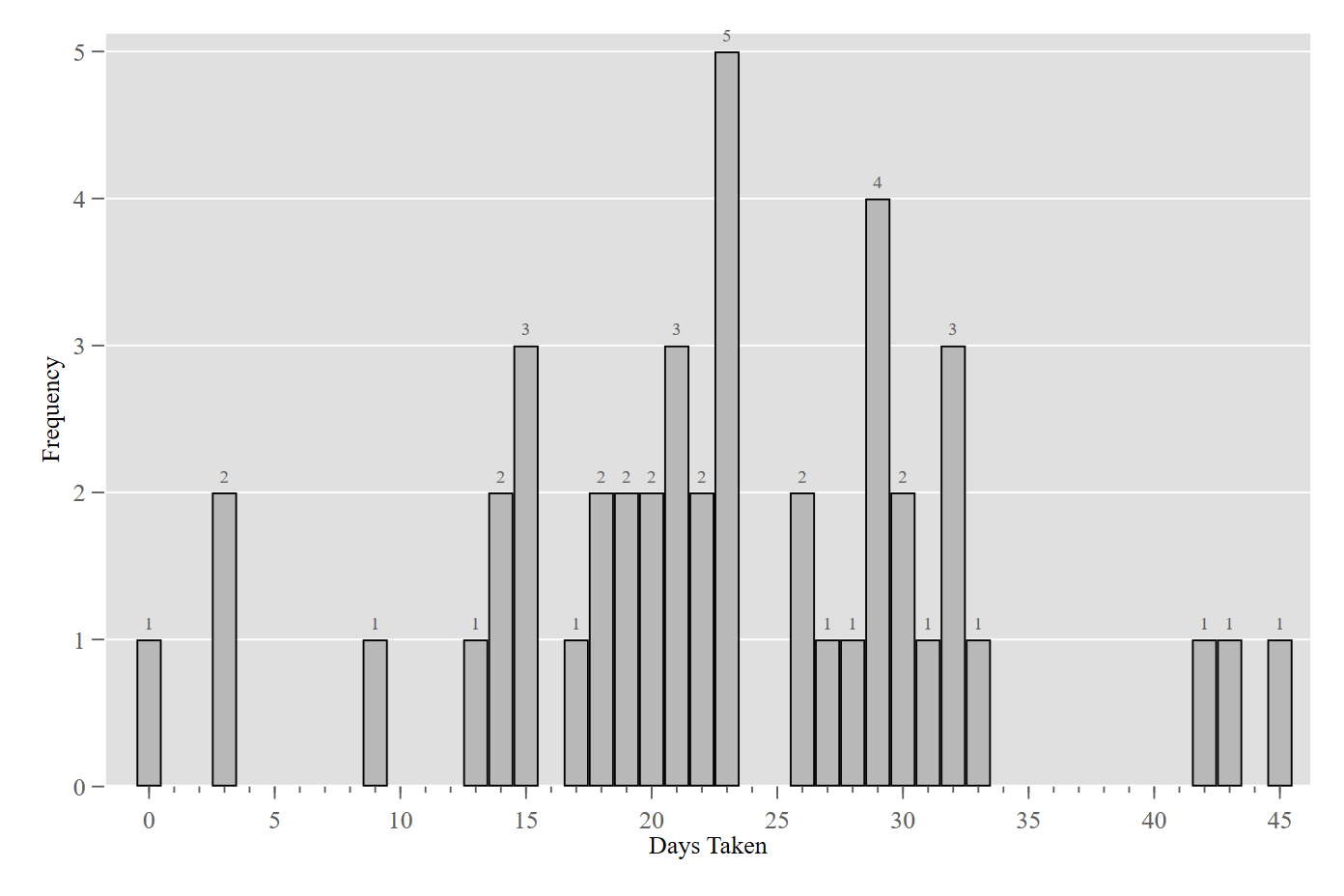

I try my best to get my reviews in on time, but I’m not perfect. It looks like I was late 7 times, but never by more than 4 days. Five of those seven tardy reviews have occurred since the pandemic started and I’ve been working from home. Probably not a coincidence. FWIW, I usually shoot an email to the editor and managing editor to let them know I’m going to need a few extra days.
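For anyone keeping a similar log, here is a minimal sketch of how lateness and turnaround fall out of the three dates tracked per review (agreed, deadline, submitted). The rows are made-up placeholders rather than real entries from the spreadsheet, and the function names are purely illustrative.

```python
from datetime import date

# Hypothetical rows, NOT real data: (date agreed, deadline, date submitted).
reviews = [
    (date(2020, 3, 2), date(2020, 4, 1), date(2020, 3, 28)),
    (date(2020, 9, 14), date(2020, 10, 14), date(2020, 10, 17)),
]


def days_late(deadline: date, submitted: date) -> int:
    """Days past the deadline; 0 when the review came in on time or early."""
    return max((submitted - deadline).days, 0)


def turnaround(agreed: date, submitted: date) -> int:
    """Days between agreeing to review and submitting the review."""
    return (submitted - agreed).days


late = [days_late(deadline, submitted) for _, deadline, submitted in reviews]
took = [turnaround(agreed, submitted) for agreed, _, submitted in reviews]

print("Late reviews:", sum(1 for d in late if d > 0))
print("Most days late:", max(late))
print("Turnaround in days:", took)
```

Clamping at zero keeps early submissions from offsetting late ones when counting tardy reviews.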

So anyway, here’s the amount of time I’ve taken to review 45 manuscripts for which I’ve tracked that information:

Last but not least, how strict/lenient of a reviewer am I? I’ve often wondered: if we could all score ourselves from 1 (super lenient) to 100 (super strict), what would my score be, and where on the distribution of criminologists would I be? It’s obviously difficult to compare, since we’re all reviewing different papers for different journals. But anecdotally, I’ve heard people say they very rarely recommend anything other than rejection. I’ve also heard people say they prefer to give the authors the benefit of the doubt so long as there are no fatal flaws and the paper seems a “good fit” for the journal. Manne tweeted last night that he recommends rejecting about one in five papers.

I’ve always considered myself somewhat lenient. The main things I’m looking for are:

- Is the research question important?

- Is the theoretical framework sound?

- Does the analysis meaningfully advance what we know about the topic?

- Is it a good fit for the journal (i.e., the threshold is higher for journals like Criminology)?

- Are there any fatal flaws with the method or analysis?

If the answers to the first four questions are yes, and the answer to the last question is no, then I’m probably inclined to give the author(s) a shot. I probably have some questions or suggestions for the author(s), but these can typically be dealt with in the revision stage (if not, then I’m probably not recommending R&R).

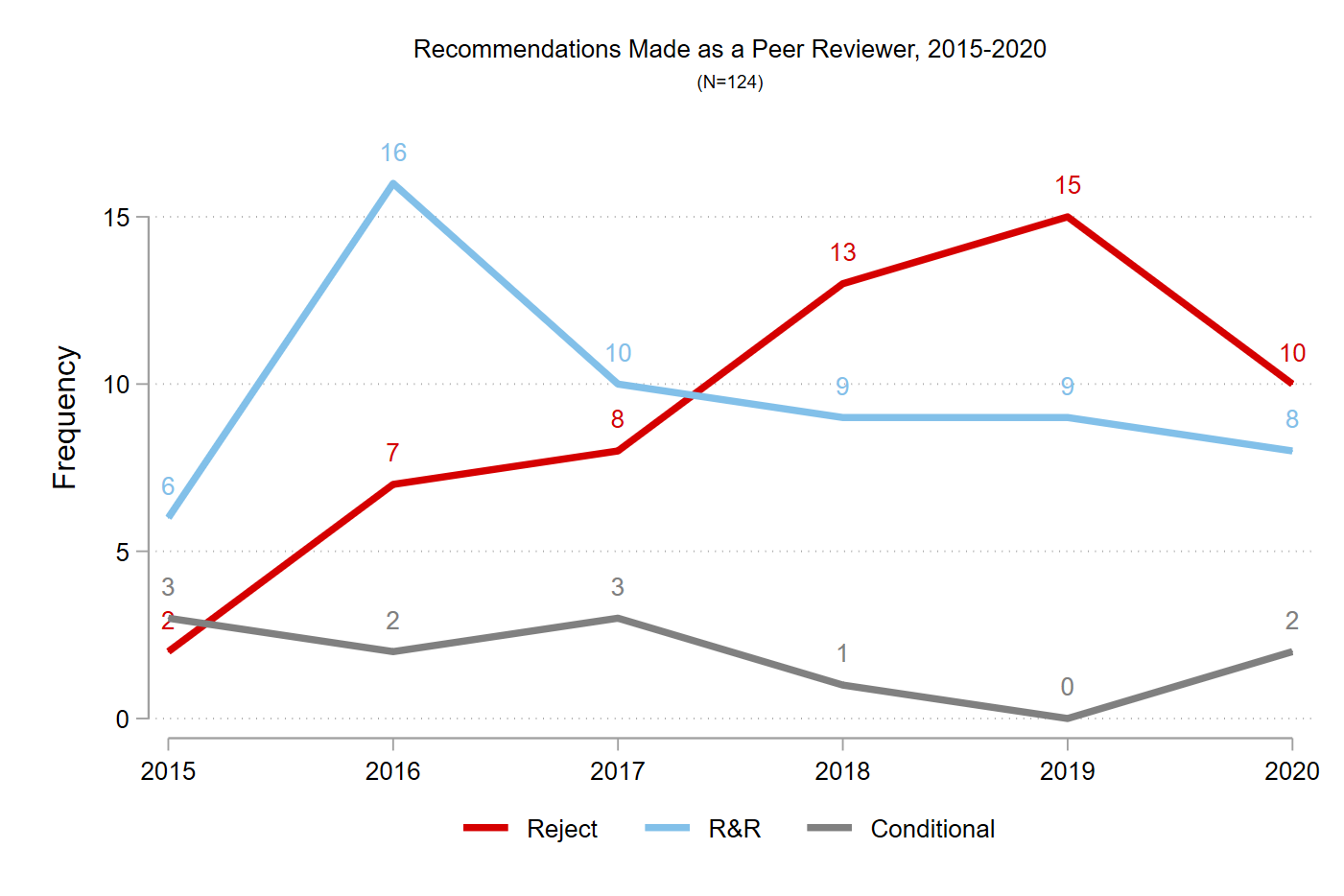

So anyway, I guess I’m somewhere in the middle? It’s hard to know because people don’t really seem to talk about this (or more likely I’ve missed when they do). Out of the 125 manuscripts I’ve reviewed, I’ve recommended:

- Rejection 55x (44%)

- R&R 59x (47%)

- Conditional acceptance 11x (9%)
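The percentages above are simple shares of the 125 completed reviews. As a quick sanity check, here is a minimal sketch that recomputes them from the three counts; the dictionary labels are just convenient keys, not anything from the spreadsheet itself.

```python
from collections import Counter

# Counts copied from the list above.
recommendations = Counter(
    {"Rejection": 55, "R&R": 59, "Conditional acceptance": 11}
)

total = sum(recommendations.values())

# Share of each recommendation, rounded to the nearest whole percent.
shares = {
    label: round(100 * count / total)
    for label, count in recommendations.items()
}

for label, count in recommendations.most_common():
    print(f"{label}: {count}x ({shares[label]}%)")
```

The same tally could be run per year or per journal to see whether the apparent drift toward stricter recommendations holds up.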

Interestingly, it looks like I’ve either gotten stricter over the years or I’ve disproportionately reviewed for higher-tier journals in recent years relative to earlier in my career. Both are probably true to some extent.

In my grad-level classes, I try to teach students how to be good reviewers. Geoff Alpert had us review each other’s research proposals in our methods seminar, and I think that was a really useful exercise. In my opinion, a good review is one that is reasonably critical, helpful, and respectful. It should go without saying that there’s no need to be snarky or condescending as a reviewer. I’ve had reviewers do it to me, and I’ve seen other reviewers do it on papers I’ve reviewed. Maybe I’ve come across that way at times, but I sure hope not, because it just doesn’t do anyone any good. It’s a bad enough feeling to have your hard work rejected - no need to dump salt on the wound.

I think it’s also much more helpful if reviewers can offer suggestions to address their criticisms (i.e., be helpful). If you think there are problems with the theory, methods, or analyses, go ahead and say that of course, but it’s even better if you can offer some advice to the authors. So instead of saying “The authors missed a bunch of important studies in this area,” why not point them in the direction of those studies? Instead of saying “the response rate is low,” why not tell the authors how you think nonresponse bias is influencing the results (e.g., what unmeasured variable is influencing both the propensity to respond to the survey, as well as the key predictors and/or outcomes)?

These are just my thoughts on the matter. In the end, there are no unobjectionable criteria for crafting reviews, as Andy Wheeler has discussed before on his blog. That’s just the way it is, I guess, but I’m curious how others approach doing their reviews.